The way content is modelled is pivotal to the overall technical design of a website and, in particular, to the choice of content management system.

Many bespoke CMSs are built using a relational database as the content repository. Most generic, off-the-shelf CMSs now use NoSQL technology, specifically document stores, to provide the necessary flexibility of schema design. Aside from these two key content storage technologies, a small number of CMSs, usually hosted services, make direct use of triple stores: content repositories that use linked data technology.

Each type of content repository has advantages and disadvantages:

- Relational database: Supported by a wealth of mature technology and offers relational integrity. However, schema change can be slow and complex.

- Document store: Provides schema flexibility, horizontal scalability and content ‘atomicity’ (the ability to handle content in discrete chunks, for versioning or workflow). However, referential integrity is hard to maintain and queries across different sets of data are harder to achieve.

- Triple store: Provides logical inference and a fundamental ability to link concepts across domains. However, the technology is less mature.

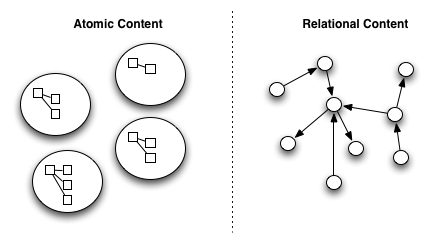

I think there are two diametrically opposed content modelling approaches; most approaches will fit somewhere on a sliding scale between the two extremes. Each exhibits properties that make some things simple and some things complex:

- Atomic content: Content is modelled as a disconnected set of content ‘atoms’ which have no inter-relationships. Within each atom is a nugget of rich, hierarchically structured content. The following content management features are easier to implement if content is modelled atomically:

  - Versioning

  - Publishing

  - Workflow

  - Access control

  - Schema flexibility

- Relational content: Content is modelled as a graph of small content fragments with a rich and varied set of relationships between the fragments. The following content management features are easier to implement if content is modelled relationally:

  - Logical inference

  - Relationship validation/integrity

  - Schema standardisation

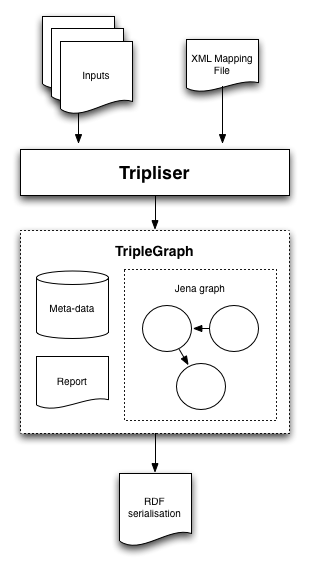

The following diagram indicates where the different content repositories fit on this scale:

For the scenario outlined below, two ‘advanced’ features have been used to highlight tension between atomic and related content models. The versioning feature causes problems for more related models, and the tag relationships feature causes problems for more atomic models.

The online film shop

A use-case to explain why linked data technology can help to build rich, content-driven websites

An online film shop needs to be built. It will provide compellingly presented information about films, and allow users to purchase films and watch them in the browser.

Here are some (very) high-level user-stories:

“As a user, I want to shop for films at http://www.onlinecinemashop.com and watch them online after purchase.”

“As an editor, I need to manage the list of available films, providing enough information to support a compelling user experience.”

An analysis indicates that the following content is needed:

- Films

  - The digital movie asset

  - Description (rich-text with screen-grabs & clips)

  - People involved (actors, directors)

  - Tags (horror, comedy, prize-winning, etc.)

Some basic functionality has been developed already…

- A page for each film

  - A list of participants, ordered by role

- A page for each person

  - A list of the films they have participated in

- An A-Z film list

- A shopping basket and check-out form

- A login-protected viewing page for each film

But now, the following advanced functionality is needed…

- Feature 1, Versioned film information: This supports several features including:

  - Rolling back content if mistakes are made

  - Allowing editors to set up new versions for publishing at a later date, whilst keeping the current version live

  - Providing a workflow, allowing approval of a film’s information, whilst continuing to edit a draft version

- Feature 2, Tagged content: Tags are used to express domain concepts, and these concepts have different types of relationships, which will support:

  - Rich navigation, e.g. “This director also worked with…”

  - Automated, intelligent aggregation pages, e.g. an aggregation page for ‘comedy’ films, including all sub-genres like ‘slapstick’

Using a relational database

Using a relational database, a good place to start is by modelling the entities and relationships…

Entities: Film, Person, Role, Term

Relationships: Participant [Person, Role, Film], Tag [Term, Film]

Assuming that the basic feature set has been implemented, the advanced features are now required:

Advanced feature 1: Versioned films

Versioning data in a relational database is not easy: often a ‘parent’ entity is needed for all foreign keys to reference, with ‘child’ entities for the different versions, each annotated with version information. Once versioning has to be handled for more than one entity, the schema starts to get complicated.
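As a rough sketch of the parent/child pattern described above, the following uses Python's built-in `sqlite3` module. The table names, column names, status values and description text are all assumptions made for illustration; they are not taken from any real schema.

```python
import sqlite3

# Hypothetical schema: table and column names are illustrative only.
conn = sqlite3.connect(":memory:")
conn.executescript("""
    -- 'Parent' row: the stable identity that foreign keys reference.
    CREATE TABLE film (
        id INTEGER PRIMARY KEY
    );
    -- 'Child' rows: one per version, annotated with version information.
    CREATE TABLE film_version (
        id          INTEGER PRIMARY KEY,
        film_id     INTEGER NOT NULL REFERENCES film(id),
        version     INTEGER NOT NULL,
        status      TEXT NOT NULL CHECK (status IN ('draft', 'live', 'archived')),
        name        TEXT NOT NULL,
        description TEXT NOT NULL,
        UNIQUE (film_id, version)
    );
""")

conn.execute("INSERT INTO film (id) VALUES (1)")
conn.executemany(
    "INSERT INTO film_version (film_id, version, status, name, description) "
    "VALUES (?, ?, ?, ?, ?)",
    [
        (1, 1, "archived", "Pulp Fiction", "First description"),
        (1, 2, "live", "Pulp Fiction", "Corrected description"),
        (1, 3, "draft", "Pulp Fiction", "Description awaiting approval"),
    ],
)


def live_version(film_id):
    """Return (version, description) for the currently published version."""
    return conn.execute(
        "SELECT version, description FROM film_version "
        "WHERE film_id = ? AND status = 'live'",
        (film_id,),
    ).fetchone()


print(live_version(1))
```

Even in this small form, every query has to know about the version table. Versioning Person, Term or the Participant relationship as well would presumably need the same split for each, which is where the schema starts to grow.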

Advanced feature 2: Tag relationships

When tagging content, one starts by trying to come up with a single, simple list of terms that collectively describe the concepts in the domain. Quickly, that starts to break down. Firstly, a 2-level hierarchy is identified; for example, putting each tag into a category: ‘Horror: Genre’, ‘Paris: Location’, and so on. Next, a multi-level hierarchy is discovered: ‘Montmartre: Paris: France: Europe’. Finally, this breaks down as concepts start to overlap and repeat. Here are a few examples: the Basque Country (France and Spain) and Arnold Schwarzenegger (actor, politician, businessman); things get even trickier with Bruce Dickinson. Basically, real-world concepts do not fall easily into a taxonomy, and instead form a graph.

Getting the relationships right is important for the film shop. If an editor tags a film as ‘slapstick’, they would expect it to appear on the ‘comedy’ aggregation page. Likewise, if an editor tagged a film as ‘Cannes Palme d’Or 2010 Winner’ they would expect it to be shown on the ‘2010 Cannes Nominees’ aggregation page. These two ‘inferences’ use different relationships, but achieve the correct result for the user.

With a relational database, 2-level hierarchies introduce complexity, multi-level hierarchies more so, and once the schema is used to model a graph, the benefits of a relational schema have been lost.
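To give a feel for the multi-level case, here is a minimal sketch using `sqlite3` and a recursive query. The schema, the `parent_id` column and the placeholder film names are assumptions for illustration only.

```python
import sqlite3

# Hypothetical schema and data, for illustration only.
conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE term (
        id        INTEGER PRIMARY KEY,
        name      TEXT NOT NULL,
        parent_id INTEGER REFERENCES term(id)  -- single parent: a tree, not a graph
    );
    CREATE TABLE film (id INTEGER PRIMARY KEY, name TEXT NOT NULL);
    CREATE TABLE tag (
        term_id INTEGER NOT NULL REFERENCES term(id),
        film_id INTEGER NOT NULL REFERENCES film(id)
    );
    INSERT INTO term VALUES (1, 'comedy', NULL), (2, 'slapstick', 1), (3, 'horror', NULL);
    INSERT INTO film VALUES (1, 'Film A'), (2, 'Film B'), (3, 'Film C');
    INSERT INTO tag VALUES (2, 1), (1, 2), (3, 3);
""")


def films_for_term(term_name):
    """Return films tagged with the term or with any term beneath it."""
    rows = conn.execute(
        """
        WITH RECURSIVE subtree(id) AS (
            SELECT id FROM term WHERE name = ?
            UNION
            SELECT term.id FROM term JOIN subtree ON term.parent_id = subtree.id
        )
        SELECT DISTINCT film.name
        FROM film
        JOIN tag ON tag.film_id = film.id
        JOIN subtree ON subtree.id = tag.term_id
        ORDER BY film.name
        """,
        (term_name,),
    ).fetchall()
    return [name for (name,) in rows]


print(films_for_term("comedy"))  # includes the film tagged only as 'slapstick'
```

This works while every term has exactly one parent. As soon as a term such as the Basque Country needs two parents, or a second kind of relationship is required, `parent_id` has to become a separate relationship table and each new relationship type needs its own query logic.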

Relational database: Advanced features summary

- Feature 1 is complex

- Feature 2 is complex

Using a document store

NoSQL storage solutions are currently very popular, offering massive scalability and a chance to break free from strict schemas and normal forms. From a document store perspective, the original entities look very different, compiled into a single structure:

```xml
<film>
  <name>Pulp Fiction</name>
  <description>The lives of two mob hit men...</description>
  <participants>
    <participant role="director">Quentin Tarantino</participant>
    <participant role="actor">Bruce Willis</participant>
    ...
  </participants>
  <tags>
    <tag>Palme d'Or Winner 1994 Cannes Film Festival</tag>
    <tag>Black comedy</tag>
    <tag>Neo-noir</tag>
    ...
  </tags>
</film>
```

The lists of available tags or participants may be handled using separate documents, or possibly ‘dictionaries’ of allowed terms.

Assuming again that the basic feature set has been implemented, the advanced features are now required:

Advanced feature 1: Versioned films

Keep multiple copies of the film document, each marked to indicate its version. All the references are inside the document, so the tags and roles are versioned too. Many off-the-shelf CMS products offer built-in document versioning based on this process.
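A minimal sketch of this copy-per-version process, using an in-memory dictionary as a stand-in for a document store. The function names, status values and film identifier are assumptions for illustration; a real CMS would provide its own versioning API.

```python
import copy

# Hypothetical in-memory stand-in for a document store: film id -> list of versions.
store = {}


def save_version(film_id, document, status="draft"):
    """Store a full copy of the document as a new version and return its number."""
    versions = store.setdefault(film_id, [])
    version = len(versions) + 1
    versions.append(
        {"version": version, "status": status, "document": copy.deepcopy(document)}
    )
    return version


def publish(film_id, version):
    """Mark one version as live and archive any previously live version."""
    for entry in store[film_id]:
        if entry["version"] == version:
            entry["status"] = "live"
        elif entry["status"] == "live":
            entry["status"] = "archived"


def live_document(film_id):
    """Return the currently published document, or None if nothing is live."""
    for entry in store[film_id]:
        if entry["status"] == "live":
            return entry["document"]
    return None


film = {"name": "Pulp Fiction", "tags": ["Black comedy"]}
v1 = save_version("pulp-fiction", film)
publish("pulp-fiction", v1)

film["tags"].append("Neo-noir")
v2 = save_version("pulp-fiction", film)  # new draft; v1 stays live

print(live_document("pulp-fiction")["tags"])  # still the v1 tags
publish("pulp-fiction", v2)  # go live with the draft
publish("pulp-fiction", v1)  # roll back after a mistake
```

Because each version is a complete copy, rolling back or scheduling a later version is only a change of status; nothing outside the document needs to be touched.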

Advanced feature 2: Tag relationships

This is even worse than before. With a relational database, we could keep adding more structures, using SQL queries to extract the hierarchical relationships; it was only when moving to graphs that relationships became too complex to manage. Without SQL to query a structure of tags, bespoke plug-in code is required to extract meaning from a bespoke hierarchy of terms. This is too complicated to build anything simple and reusable.

Document store: Advanced features summary

- Feature 1 is simple

- Feature 2 is very complex

Using a triple store

In triple stores, all the content is reduced to triples. So all our content would look something like this…

http://www.imdb.com/title/tt0450345/ -> dc:title -> “The Wicker Man”

Assuming again that the basic feature set has been implemented, the advanced features are now required:

Advanced feature 1: Versioned films

Versioning triples would not be straightforward. A complex overlaid system of ‘named graphs’ could be used to separate out content items into different versions, but this would be more complex than for relational databases.
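To illustrate the idea only, the sketch below models named graphs as a fourth element on each triple (a ‘quad’), with a separate lookup recording which graph is live. The graph names, the lookup and the description text are hypothetical; a real triple store would manage named graphs itself.

```python
FILM = "http://www.imdb.com/title/tt0450345/"

# Quads: (subject, predicate, object, graph). Graph names are hypothetical.
quads = [
    (FILM, "dc:title", "The Wicker Man", "urn:example:film/v1"),
    (FILM, "dc:description", "First description", "urn:example:film/v1"),
    (FILM, "dc:title", "The Wicker Man", "urn:example:film/v2"),
    (FILM, "dc:description", "Revised description", "urn:example:film/v2"),
]

# Which named graph currently holds the published version of each resource.
live_graphs = {FILM: "urn:example:film/v1"}


def live_triples(subject):
    """Return the triples for a subject from its currently live graph only."""
    graph = live_graphs[subject]
    return [(s, p, o) for (s, p, o, g) in quads if s == subject and g == graph]


print(live_triples(FILM))
```

Even this toy version needs bookkeeping outside the triples themselves. Once a film's tags and participants are resources with versions of their own, deciding which graphs to combine into one consistent view is where the complexity lies.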

Advanced feature 2: Tag relationships

This is simple! SPARQL queries will offer us all the inferred or aggregated content that we require, as long as the ontology has been created, and curated, with care.
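The kind of inference involved can be sketched in plain Python over a handful of triples. This is not how a triple store is implemented; it only mimics what a query would return. `skos:broader` is a real SKOS property, while the `ex:tag` predicate and the film and term identifiers are made up for the example.

```python
# Hypothetical data: ex:tag and the film/term identifiers are illustrative only.
triples = {
    ("term:slapstick", "skos:broader", "term:comedy"),
    ("term:black-comedy", "skos:broader", "term:comedy"),
    ("film:a", "ex:tag", "term:slapstick"),
    ("film:b", "ex:tag", "term:comedy"),
    ("film:c", "ex:tag", "term:horror"),
}


def narrower_terms(term):
    """Return the term plus every term that reaches it via skos:broader."""
    found = {term}
    frontier = [term]
    while frontier:
        current = frontier.pop()
        for s, p, o in triples:
            if p == "skos:broader" and o == current and s not in found:
                found.add(s)
                frontier.append(s)
    return found


def films_tagged(term):
    """Return films tagged with the term or with any narrower term."""
    terms = narrower_terms(term)
    return sorted(s for s, p, o in triples if p == "ex:tag" and o in terms)


print(films_tagged("term:comedy"))  # the 'slapstick' film appears under 'comedy'
```

In SPARQL 1.1 the same question can be asked with a property path, along the lines of `SELECT ?film WHERE { ?film ex:tag/skos:broader* term:comedy }`, with no bespoke traversal code. Adding a second kind of relationship, such as prize winners also counting as nominees, means adding triples and adjusting the query rather than changing a schema.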

Triple store: Advanced features summary

- Feature 1 is very complex

- Feature 2 is simple

Why a single content model can’t solve all your problems

In the introduction I explained how each of the different content repositories has advantages and disadvantages. It is possible to model most domains entirely in relational databases, document stores or triple stores. However, as the examples above show, some features are too complicated to implement in some content repositories. The solution, in my opinion, is to use different content repositories depending on what you need to do, even within the same domain.

As an analogy, serving a search engine from a relational database is not a good idea, as the content model is not well tuned to free-text searching. Instead a separate search engine would be created to serve the specific functionality, where relational content is flattened to produce a number of search indices, allowing fast, free-text search capabilities. As this example shows, for a particular problem, content must be modelled in an appropriate way.

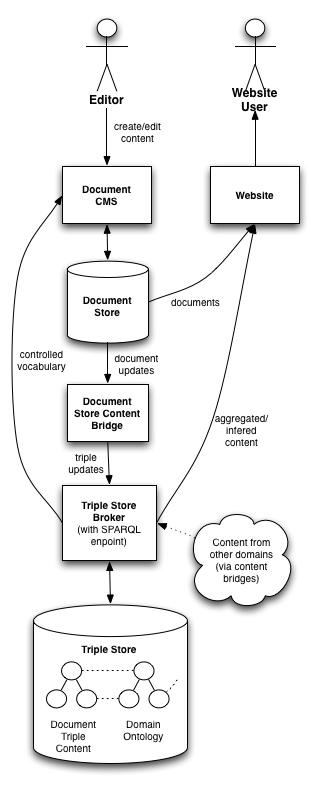

Using a document store + triple store

For this solution, document content is converted into triples by means of a set of rules. These rules are processed by a ‘bridge’, which converts the change stream provided by the document store (an Atom feed, for example) into RDF or SPARQL. In this way, the triple store contains a copy of the document data in the form of triples (with a small degree of latency due to the conversion process). The system now provides a document store for fast, scalable access to the full content, and a SPARQL endpoint providing all the inference and aggregation that is needed.
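As a rough sketch of what the conversion rules might look like, the following turns a cut-down version of the film document into triples using Python's standard `xml.etree.ElementTree`. The rule format, the `ex:` predicates and the subject identifier are assumptions for illustration; reading the change stream and writing to the triple store are left out.

```python
import xml.etree.ElementTree as ET

FILM_XML = """
<film>
  <name>Pulp Fiction</name>
  <participants>
    <participant role="director">Quentin Tarantino</participant>
    <participant role="actor">Bruce Willis</participant>
  </participants>
  <tags>
    <tag>Black comedy</tag>
    <tag>Neo-noir</tag>
  </tags>
</film>
"""

# Hypothetical rules: (path within the document, predicate to emit).
RULES = [
    ("name", "dc:title"),
    ("tags/tag", "ex:tag"),
]


def document_to_triples(subject, xml_text):
    """Apply the rules to one document and return (subject, predicate, object) triples."""
    root = ET.fromstring(xml_text)
    triples = []
    for path, predicate in RULES:
        for element in root.findall(path):
            triples.append((subject, predicate, element.text))
    # Participants carry their role in an attribute, so the predicate is derived from it.
    for element in root.findall("participants/participant"):
        triples.append((subject, "ex:" + element.get("role"), element.text))
    return triples


for triple in document_to_triples("ex:film/pulp-fiction", FILM_XML):
    print(triple)
```

In a fuller bridge the tag and participant values would map to resource identifiers rather than plain strings, so that they can link to the ontology held in the triple store.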

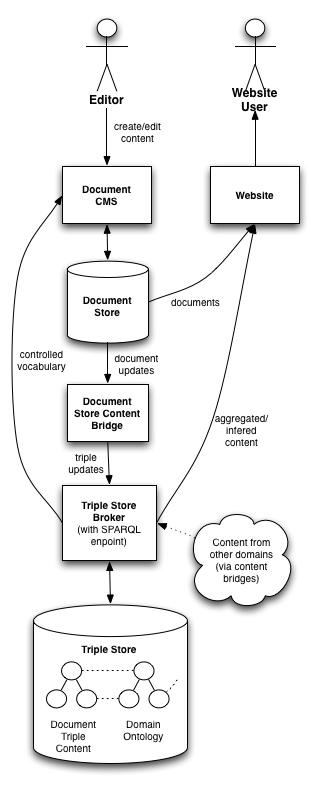

The following diagram represents the architecture used in this solution. It combines a document store, and a triple store, to provide both atomic content management and relational structures and inferences:

Continuing with the advanced feature implementation…

Advanced feature 1: Versioned films

See ‘document stores’.

Advanced feature 2: Tag relationships

See ‘triple stores’.

Document store + triple store: Advanced features summary

- Feature 1 is simple

- Feature 2 is simple

By leveraging the innate advantages of two different content models, all the advanced features are simple to achieve!

Cross-domain aggregation…

What seems interesting about this proposed solution is that it uses semantic web technologies without publishing any data interchange formats on the web. It would, however, seem a shame to stop short of publishing linked data. I would advocate also using this approach as a platform for publishing linked data, since having a triple store in place significantly reduces the overhead of doing so.

Combining this approach with ‘open linked data’ and SPARQL endpoints would demonstrate the power of modelling content as triples: expressing relationships in a reusable, standardised way, allows content to be aggregated within, or between, systems, ecosystems, or organisations.

Q&A

If a relational database is not used for content, how can data integrity be maintained?

In short, it is not. Integrity is applied at the point of entry through form validation and referential look-ups. Integrity then becomes the responsibility of systems higher up the chain (for example, the code that renders the page: if an actor doesn’t exist for a film, do not show them). If data integrity really is needed (for example, financial or user data), maybe an SQL database is a better choice; it is still possible to bridge content into the triple store if it is needed.
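As a small illustration of pushing integrity up to the rendering code, the sketch below skips participants whose person record no longer exists. The data shapes and identifiers are hypothetical.

```python
# Hypothetical lookup of people that currently exist in the content repository.
people = {
    "quentin-tarantino": "Quentin Tarantino",
    "bruce-willis": "Bruce Willis",
}

film = {
    "name": "Pulp Fiction",
    "participants": [
        {"role": "director", "person": "quentin-tarantino"},
        {"role": "actor", "person": "bruce-willis"},
        {"role": "actor", "person": "deleted-person"},  # dangling reference
    ],
}


def renderable_participants(film, people):
    """Return (role, display name) pairs, skipping references to missing people."""
    return [
        (p["role"], people[p["person"]])
        for p in film["participants"]
        if p["person"] in people
    ]


print(renderable_participants(film, people))
```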

How is the ontology data managed?

This is a tricky one, and it really depends. I think there are three choices:

- Curate the domain ontology manually: Editing and importing RDF/Turtle. Needs people with the relevant skills. Could be ideal for small, stable ontologies.

- Use a linked data wiki: Some tools and frameworks exist for building semantic wikis (e.g. OntoWiki). This could be too generic an approach for some, more complex, ontologies.

- Use a relational database CMS + data bridge: Despite the drawbacks of relational databases for content, it may still be a practical solution for ontological data. By building a CMS on a relational database we get all the mature database technology and data integrity, only leaving the tricky process of bridging the ontology data into a triple store.

And finally…

Some of the ideas in this post are based on projects happening at the BBC. Many thanks in particular to the BBC News & Knowledge team for sharing their recent endeavours in semantic web technologies.